Edition 16 | April 2026

What's Brewing in AI

Is Coding Dead, or Does It Matter More Than Ever?

And What Does This Mean for Schools?


Jensen Huang says stop coding. Andrew Ng says that's the worst advice ever. The debate is about the future of work — but schools need to pay attention.

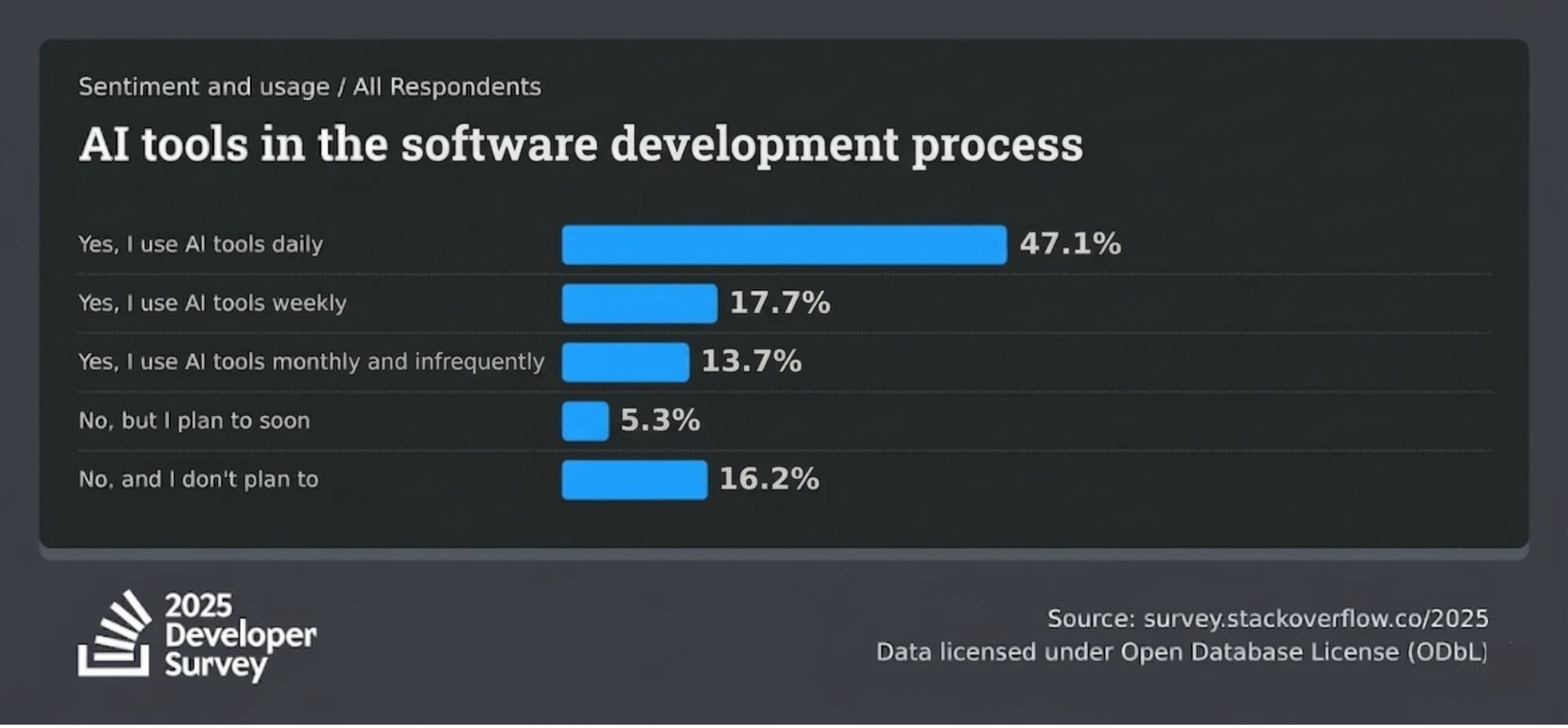

There's a fight happening right now in the tech industry, and it's about whether coding — as a professional skill — still matters. According to the Stack Overflow Developer Survey 2025, 84% of developers use or plan to use AI tools in their workflow—79% already use them, while 5% plan to use them. This is up from 70% just two years ago. But only 29% trust the output. Adoption is racing ahead; confidence in the results is not.

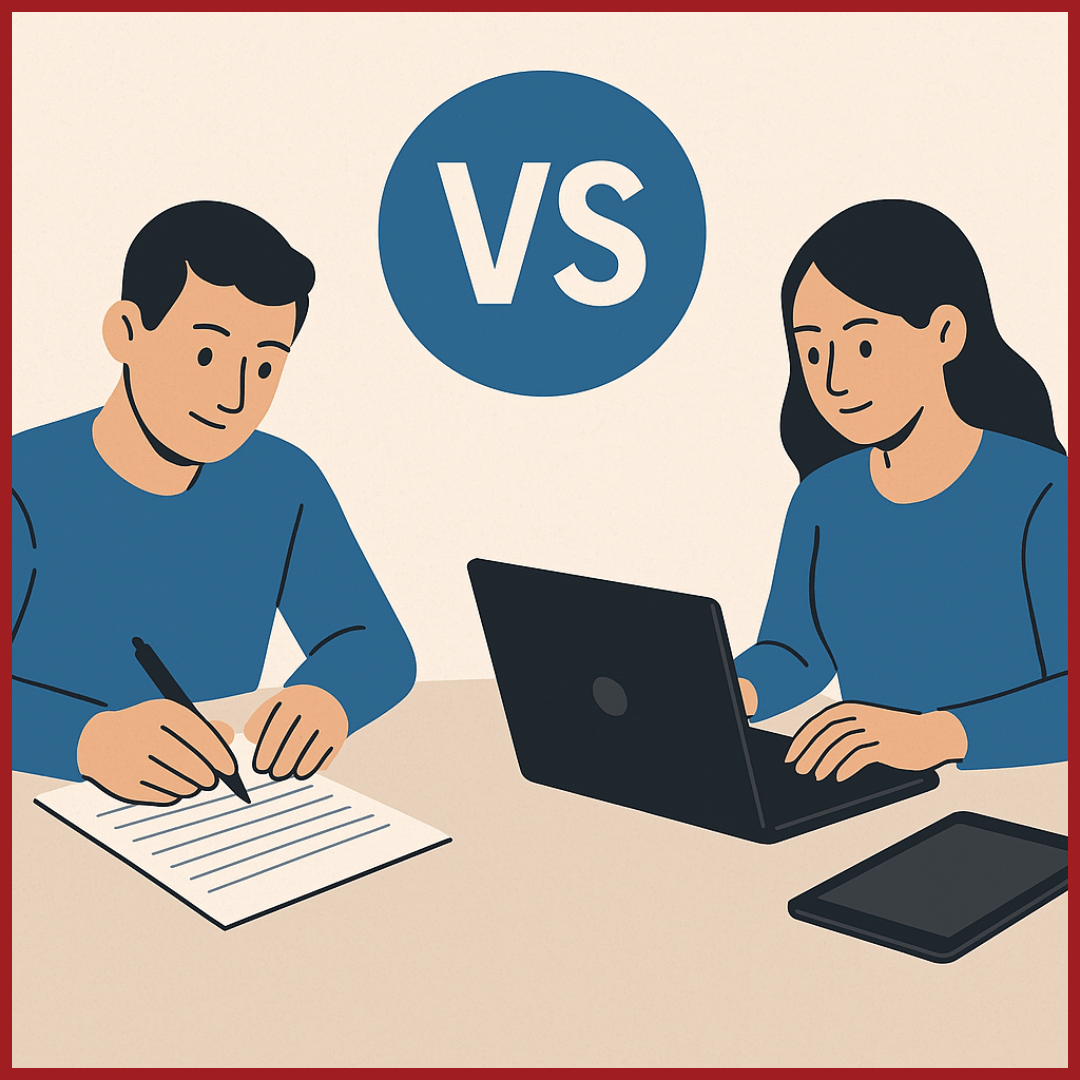

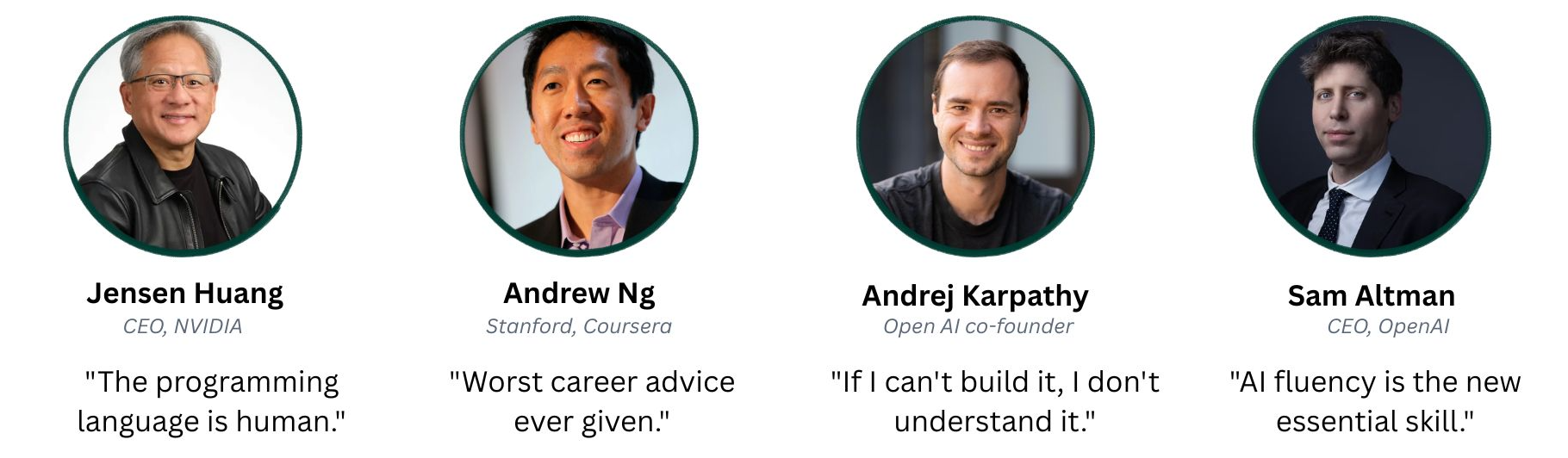

On one side, Jensen Huang — the CEO of NVIDIA — stood on stage at the World Government Summit and told people to stop learning to code. AI will handle that. Learn biology, farming, education — anything where you can build real domain expertise. The programming language of the future, he said, is plain English.

On the other side, Andrew Ng — the Stanford professor who co-founded Coursera and helped build Google's AI division — has been pushing back hard. Telling people not to learn programming, he said, will go down as "some of the worst career advice ever given."

👥 The voices in the debate

What they're arguing about matters for how we prepare children for the world they'll actually live in.

What are the tech leaders actually saying?

Jensen Huang isn't saying the thinking behind programming doesn't matter. He's saying that memorising Python syntax is becoming less important because AI can now turn plain English instructions into working code. His advice is aimed at professionals: spend your time building expertise in a field where you'll use AI, not learning to write code by hand.

💬 Jensen Huang

"It is our job to create computing technology such that nobody has to program. And that the programming language is human."

World Government Summit, Dubai, 2024

Andrew Ng's counter is also aimed at the professional world. Every time tools have made coding more accessible, the number of people who need coding skills has grown, not shrunk. AI-assisted coding makes now a better time to learn programming, not a worse one.

💬 Andrew Ng

"Statements discouraging people from learning to code are harmful!"

LinkedIn, March 2025

Andrej Karpathy — one of the original co-founders of OpenAI, former head of AI at Tesla — is harder to pin down. He coined the term "vibe coding" and does it himself daily. But his personal rule remains: "If I can't build it, I don't understand it." AI coding tools work well for routine tasks but fail at novel, complex problems.

Sam Altman, CEO of OpenAI, recently posted a tweet thanking developers for the work they used to do writing code "character-by-character" — and developers did not take it well. Dario Amodei, CEO of Anthropic, has estimated that AI could soon be generating 90% of all code written by software teams.

Matt Welsh, former Harvard CS professor, has gone furthest: coding is a job that robots will do. Meanwhile, Patrick Moorhead, a long-time tech analyst, offers a historical counterpoint: "For over 30 years, I've heard 'XYZ will kill coding' yet we still don't have enough programmers."

How fast is this happening?

Fast. In early 2024, fewer than 14% of enterprise engineers used AI coding assistants; Gartner projects 90% by 2028. Among the wider developer population surveyed by Stack Overflow in 2025, 84% already use or plan to use AI tools. GitHub reports that 46% of all code written by Copilot users is now AI-generated, and developers using these tools complete tasks 55% faster. Cursor, an AI coding tool that barely existed two years ago, saw its revenue run rate double in just three months, leaping from $1 billion in late 2025 to over $2 billion by March 2026.

How fast is AI taking over the act of writing code?

55% faster task completion with AI coding assistants

Sources: Gartner (2025) · Stack Overflow Developer Survey (2025) · GitHub/Accenture (2024) · Anysphere (2026)

So what does any of this have to do with schools?

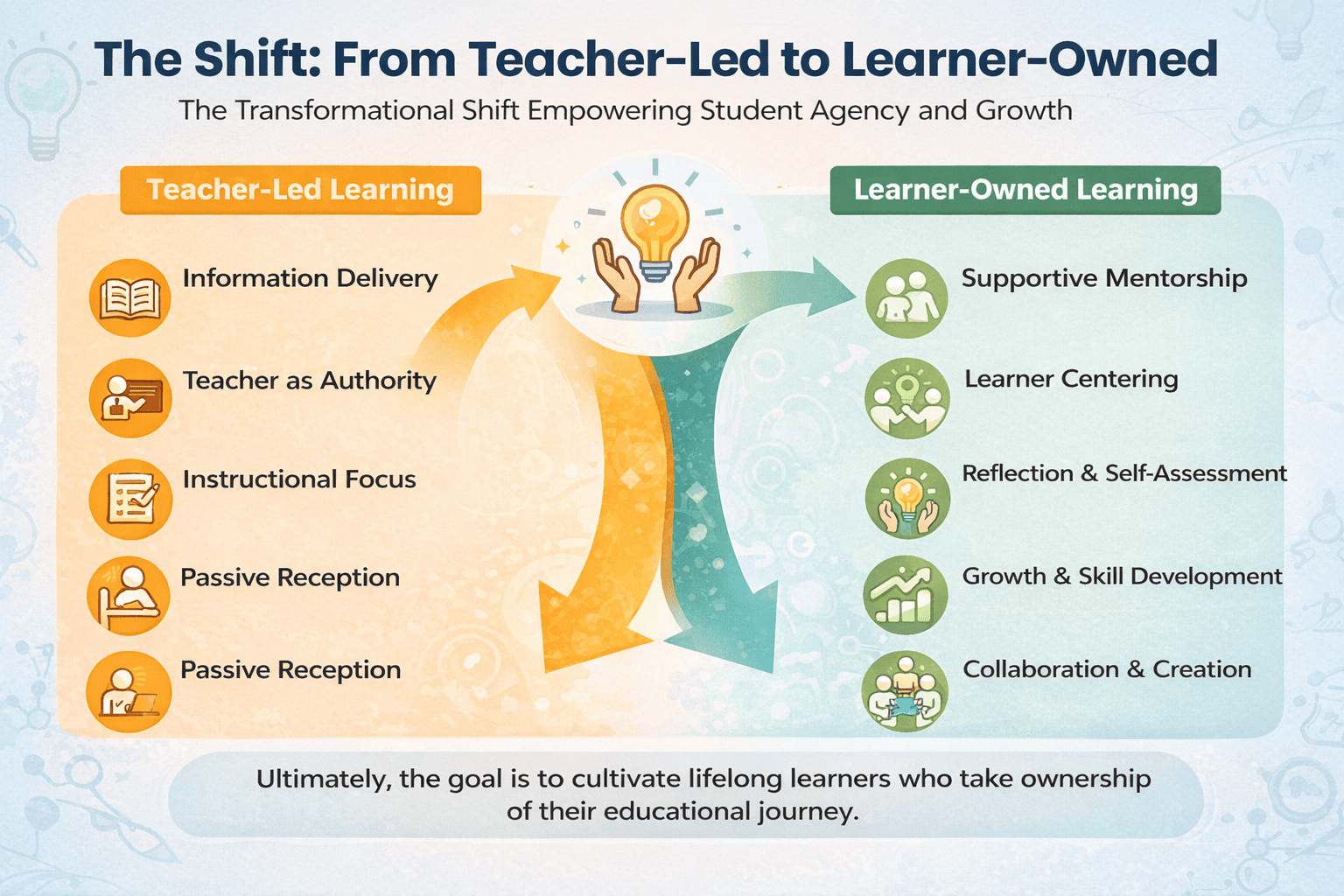

If AI can write code, what's the educational value of teaching children to code? Was it ever about the code itself, or was it about the thinking? And if it was about the thinking, has that thinking become more important or less?

Both camps actually point toward the same underlying skills — even as they disagree about the professional relevance of writing code.

When Huang says "learn domain expertise and let AI handle the code," he's assuming the person using AI can think clearly about what they want, decompose a problem into parts, evaluate whether the AI's output is correct, and understand the ethical implications of what they're building. Those are all skills that need to be developed somewhere.

When Ng says "learn to code, it's more important than ever," he's not talking about syntax memorisation. He's talking about the way of thinking that coding teaches — structured reasoning, system-level understanding, the ability to debug not just programs but ideas.

When Karpathy says "if I can't build it, I don't understand it," he's saying: you need to know the basics before you can tell AI what to do.

All three are describing a person who can think computationally, reason about data, and understand how AI systems work. They just disagree about whether writing professional code is the right way to get there.

| 💡 |

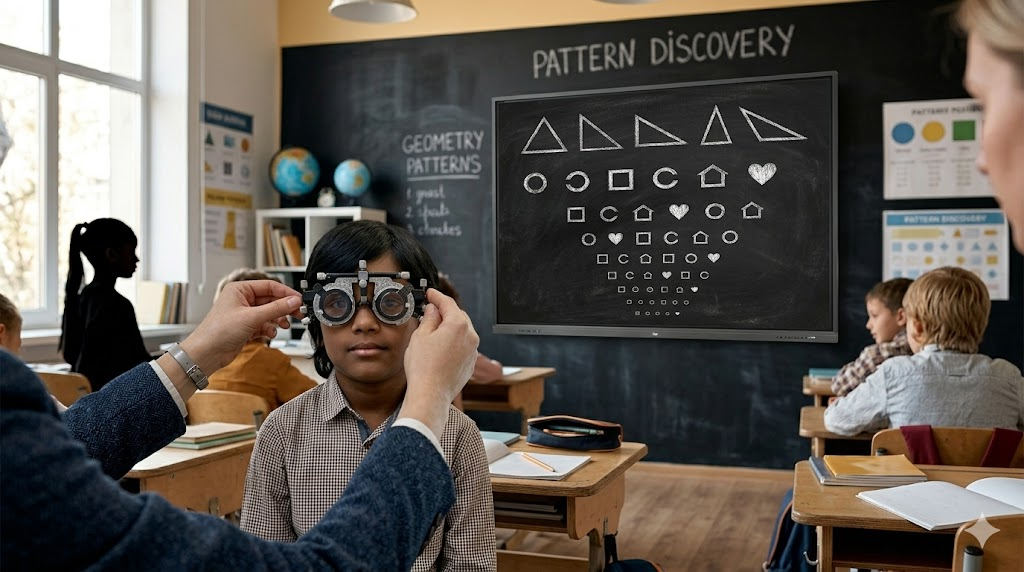

For schools, this lands in a simple place. A 10-year-old writing a block-coding program to make a character navigate a maze isn't being trained as a software engineer. They're practising decomposition, sequencing, debugging, and problem-solving — coding just happens to be how they do it. |

Six capabilities that show up on every side of this debate

Whether you follow the workforce debate or read the education researchers who study children, the same six capabilities come up again and again.

🧩 Decomposition

Breaking a big problem into smaller ones. When a child codes a simple game, they learn to split "character moves and jumps over obstacles" into movement, input, collision, scoring. A doctor diagnosing a patient does the same thing. So does anyone directing AI, because AI needs clear, decomposed instructions. A person who tells AI "make me an app" gets junk. A person who says "I need a screen with a filterable list of categorised items" gets something useful. The difference is decomposition, and you can teach it from age six.
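As a rough illustration of that split, here is a minimal Python sketch of a tiny obstacle game broken into input, movement, collision, and scoring. Every name in it (`Game`, `read_input`, `play`, and so on) is hypothetical and invented for this example; it is not taken from any curriculum or product.

```python
from dataclasses import dataclass


@dataclass
class Game:
    """Hypothetical game state, invented for this sketch."""
    x: int = 0             # how far the character has run
    jumping: bool = False  # True only during the step of a jump
    score: int = 0
    alive: bool = True


def read_input(key: str) -> str:
    """Input: turn a key press into an action name."""
    return "jump" if key == " " else "run"


def move(game: Game, action: str) -> None:
    """Movement: the character always runs forward; a jump lasts one step."""
    game.x += 1
    game.jumping = action == "jump"


def check_collision(game: Game, obstacles: set[int]) -> None:
    """Collision: reaching an obstacle without jumping ends the game."""
    if game.x in obstacles and not game.jumping:
        game.alive = False


def update_score(game: Game, obstacles: set[int]) -> None:
    """Scoring: one point for every obstacle cleared."""
    if game.alive and game.x in obstacles:
        game.score += 1


def play(keys: str, obstacles: set[int]) -> Game:
    """Run the four small parts in order, once per key press."""
    game = Game()
    for key in keys:
        if not game.alive:
            break
        move(game, read_input(key))
        check_collision(game, obstacles)
        update_score(game, obstacles)
    return game


result = play("dd d d", obstacles={3, 5})
print(result.score, result.alive)  # 2 True
```

Each function answers one small question, so a bug in scoring can be found and fixed without touching movement or collision. That is the same habit a person needs when writing a clear instruction for an AI tool.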

| 🔍 |

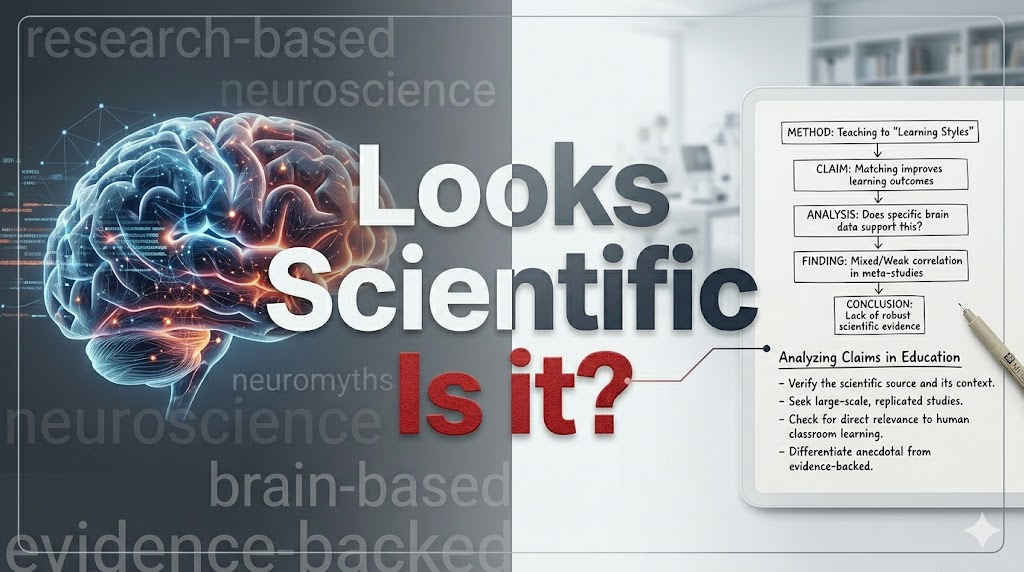

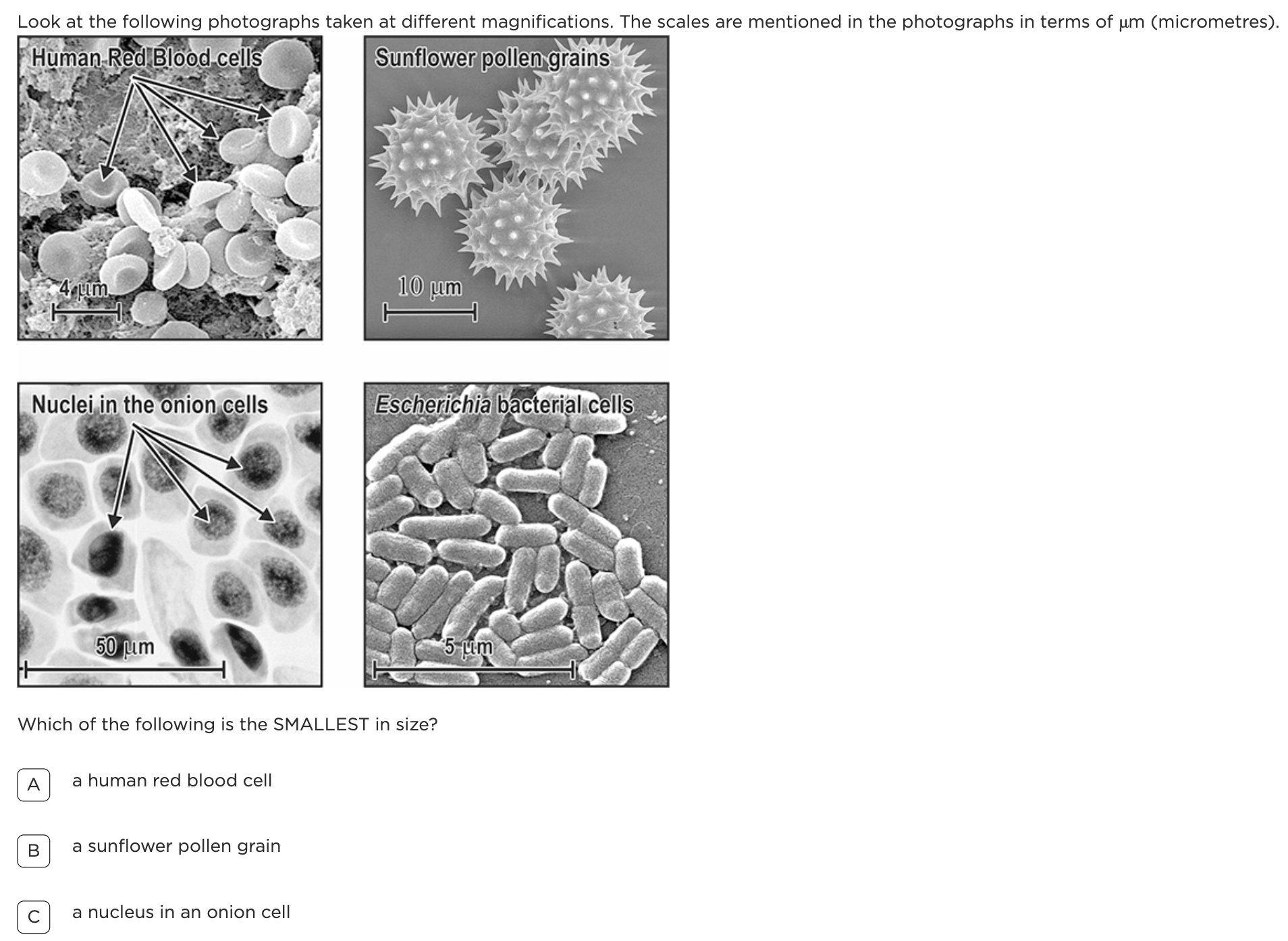

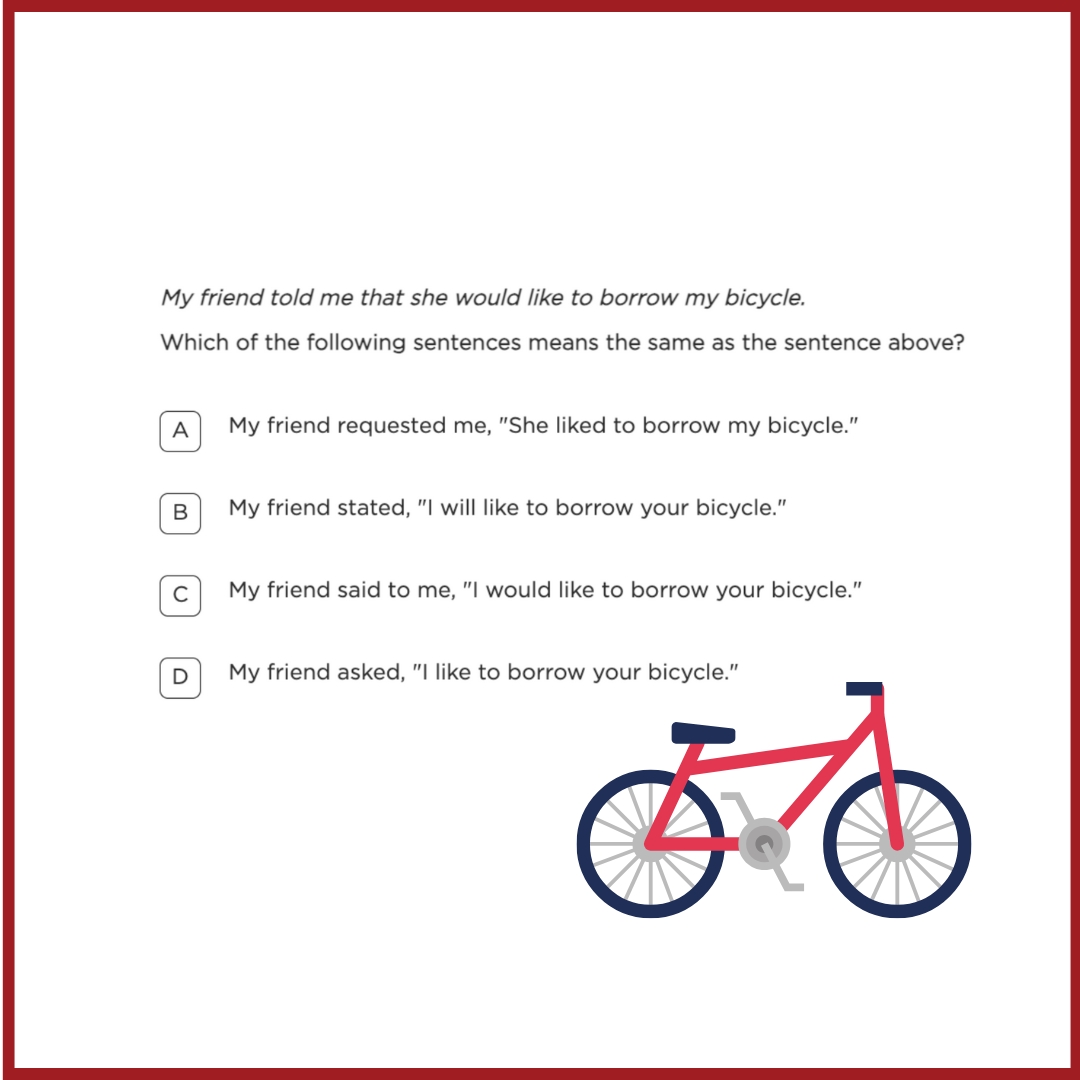

Pattern recognition A "loop" in coding is the same idea as a repeating chorus in music, a multiplication table in maths, or a daily routine. Once children start seeing these structural similarities, they start understanding how AI works — because AI runs on patterns too. And a child who understands patterns can also recognise when a pattern is misleading or biased. |

📊 Data reasoning

"Our product is rated 4.8 stars." Rated by whom? How many people? What were they asked? "AI says this candidate is the best fit." Best fit according to what data? Whose experiences are missing? Every major AI system makes decisions based on data. Children who can't ask these questions will simply accept whatever the algorithm tells them.
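The "how many people?" question can be made concrete with a short Python sketch. The two sets of ratings below are made up for illustration, and `describe_rating` is a hypothetical helper; the point is only that the same headline number can rest on very different evidence.

```python
from statistics import mean


def describe_rating(ratings: list[int]) -> str:
    """Report the average together with how many ratings it rests on."""
    return f"{mean(ratings):.1f} stars from {len(ratings)} ratings"


# Made-up data: five ratings, perhaps all from friends of the seller.
few = [5, 5, 5, 5, 4]

# Made-up data: 2,000 ratings, including some unhappy buyers.
many = [5] * 1640 + [4] * 320 + [1] * 40

print(describe_rating(few))   # 4.8 stars from 5 ratings
print(describe_rating(many))  # 4.8 stars from 2000 ratings
```

Both products can honestly advertise "4.8 stars". Only the second number tells a reader much, and even then it says nothing about who was asked or what they were asked.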

🤖 AI literacy

Most people don't know that ChatGPT doesn't "know" anything: it predicts the next likely word based on patterns in its training data. An AI image generator doesn't "imagine": it generates pixels that match patterns learned from the images it was trained on. A child who understands this will ask better questions, catch confident-sounding wrong answers, and understand why AI can write a poem but can't tell you if the poem is any good. Sam Altman said his children will grow up "vastly more capable" not because AI makes them smarter, but because they'll have fluency with AI tools. Fluency, not dependency.

Look at what employers told the World Economic Forum they'll need most by 2030:

AI & big data top the list, but notice what else is here: creative thinking, resilience, curiosity, analytical thinking. The skills both camps in this debate take for granted.

Source: World Economic Forum, Future of Jobs Report 2025

⚖️ Ethical reasoning

An AI system decides which students get admission to a competitive school. It recommends students from wealthier areas at twice the rate. The AI isn't "trying" to be unfair: it learned from historical data that already had the bias baked in. Is the AI working correctly? What should be done? There's no answer you can look up. It requires reasoning about values and consequences, and this kind of thinking matters more as AI takes on more decisions in healthcare, hiring, and education.
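Noticing a gap like the one in this scenario starts with a simple count. The Python sketch below uses made-up admission decisions and a hypothetical helper, `selection_rates`, to compare how often each group is recommended. It measures the gap; it does not decide what is fair.

```python
def selection_rates(decisions: list[tuple[str, bool]]) -> dict[str, float]:
    """Share of applicants recommended, per group.

    `decisions` is a list of (group, recommended) pairs.
    """
    totals: dict[str, int] = {}
    recommended: dict[str, int] = {}
    for group, was_recommended in decisions:
        totals[group] = totals.get(group, 0) + 1
        recommended[group] = recommended.get(group, 0) + int(was_recommended)
    return {group: recommended[group] / totals[group] for group in totals}


# Made-up data: 100 applicants from each kind of area.
decisions = (
    [("wealthier area", True)] * 40
    + [("wealthier area", False)] * 60
    + [("other area", True)] * 20
    + [("other area", False)] * 80
)

rates = selection_rates(decisions)
ratio = rates["wealthier area"] / rates["other area"]

print(rates)            # {'wealthier area': 0.4, 'other area': 0.2}
print(round(ratio, 2))  # 2.0
```

The code can show that one group is recommended at twice the rate of the other. Whether that is acceptable, and what to do about it, is the part that still needs human reasoning about values and consequences.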

🎨 Creative confidence with technology

The goal is for young people to be creators of technology, not just consumers. When a child writes a program that makes a character dance on screen, they're learning that technology is something they can shape, and that the apps they use were made by people who made choices that could have gone differently. That's why coding belongs in the school curriculum: not as career training, but as a way of building this mindset.

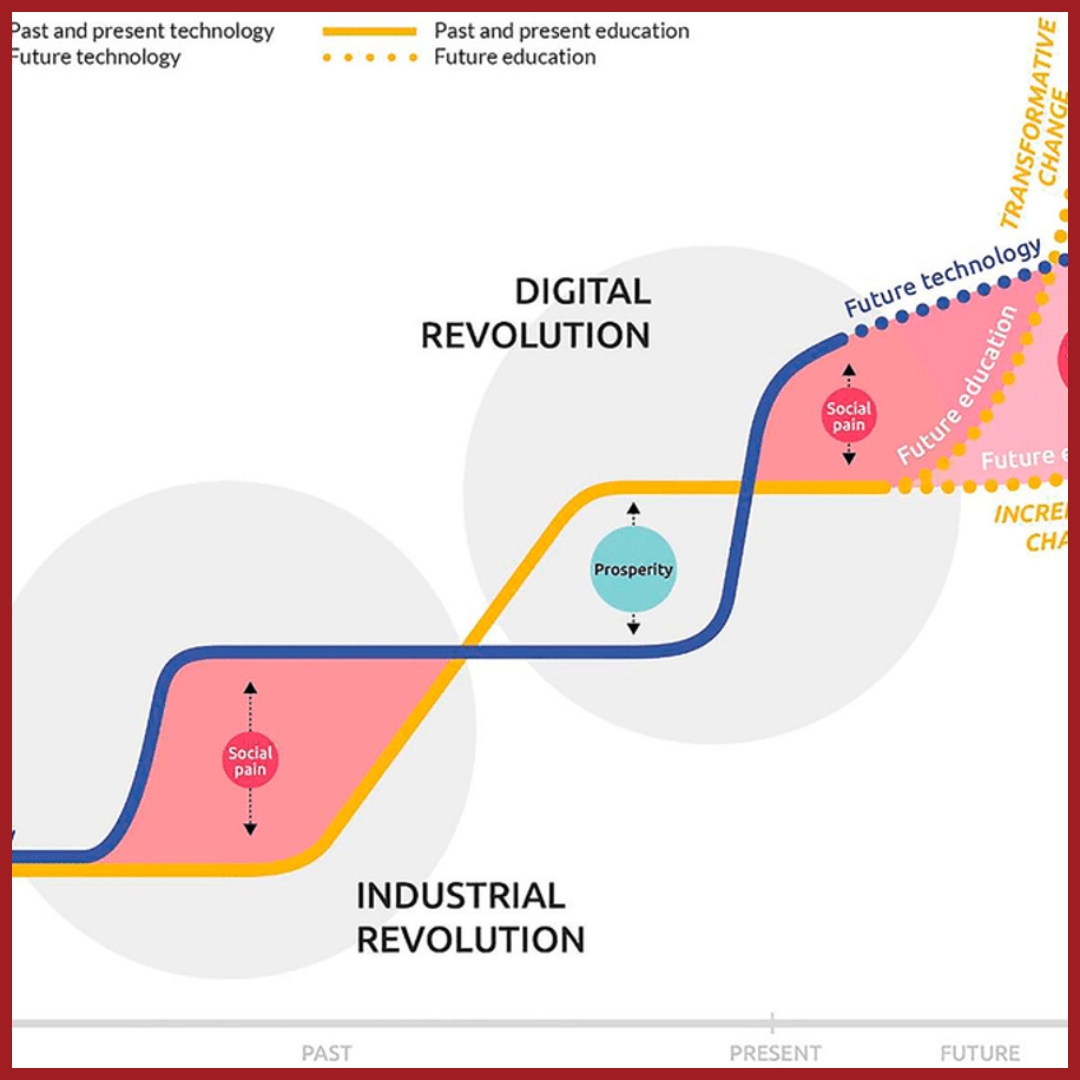

Don't wait for skills to trickle down

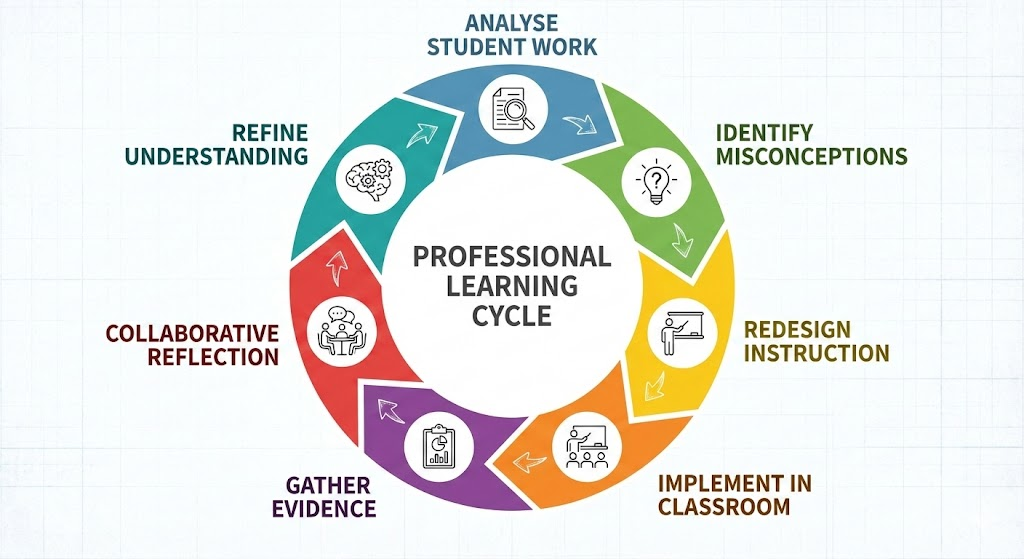

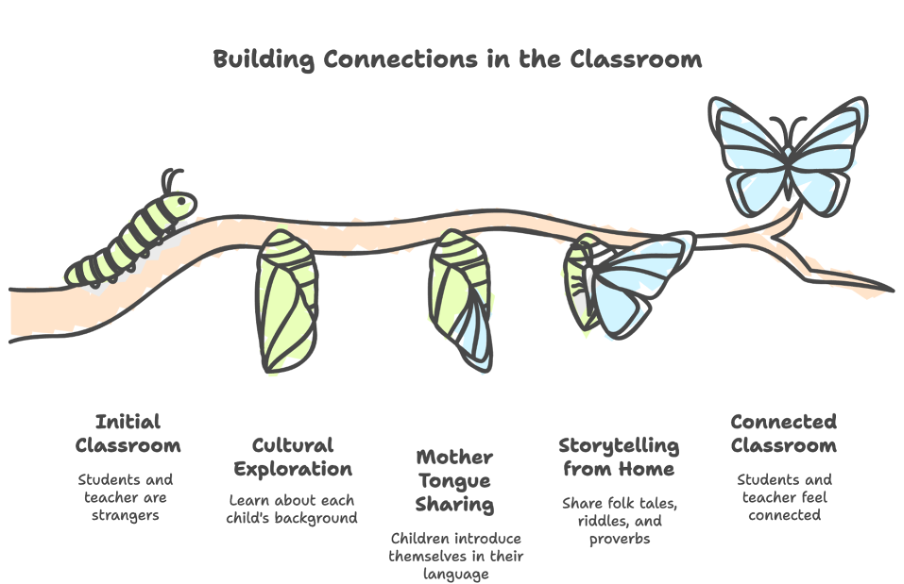

Most schools today teach technology the way the industry learned it: start with unplugged activities, move to block coding, then text coding, and only after all that — maybe in senior secondary — introduce AI. The problem is that this means schools are always years behind. By the time AI shows up in a primary school classroom, the workplace has already moved on.

We think this is backwards. And we're doing something about it.

At Educational Initiatives, we've been building a curriculum for grades 1–8 that runs logic, coding, data, AI, critical thinking, and digital literacy all at once — 10 parallel strands from grade 1, not a slow drip from grade 9. A child on our platform encounters AI Foundations and Data Analysis from the very start. By grade 8, they've been working alongside AI for years. They're not surprised by it. They understand it.

We teach coding for the same reason we teach maths — not because every child will become a mathematician, but because it's a playground for logic, tinkering, and building things. From grade 5, AI-assisted coding enters the picture, so children learn to write code and direct AI to write code. They learn to prompt well, evaluate output, and understand what's happening behind the response. And honestly? We love watching kids light up when they build something that works — whether they typed the code or told AI to write it.

💡 We don't think you have to choose between coding and AI. You teach the thinking that makes both useful, and you start early.

The calculator analogy — and why it doesn't hold

People sometimes compare AI coding tools to calculators. We don't make kids do long division by hand anymore, so why make them learn to code when AI can do it?

But we do still teach children how multiplication works, what division means, and how to estimate whether an answer is reasonable. The calculator handles the computation; the human provides the understanding. A child who uses a calculator without understanding multiplication will happily accept "7 × 8 = 92" if they press the wrong key. A child who understands multiplication will immediately know something is off.

AI coding tools work the same way, but the stakes are higher. A calculator gives you a wrong number. AI gives you a wrong system — one that looks completely functional and might run for months before anyone notices it's quietly producing harmful results.
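The estimation habit described above can be written down as a small check. This Python sketch assumes positive whole numbers and uses a hypothetical function, `looks_reasonable`, that rounds one factor to the nearest tens to get quick upper and lower bounds, much as a child might reason "7 × 8 must be less than 7 × 10".

```python
def round_up_to_ten(n: int) -> int:
    """Smallest multiple of ten that is at least n."""
    return -(-n // 10) * 10


def round_down_to_ten(n: int) -> int:
    """Largest multiple of ten that is at most n."""
    return n // 10 * 10


def looks_reasonable(a: int, b: int, claimed: int) -> bool:
    """Estimate-style check for a claimed product of two positive integers.

    It only catches answers outside easy bounds; it does not prove
    that a claimed answer is exactly right.
    """
    upper = min(a * round_up_to_ten(b), round_up_to_ten(a) * b)
    lower = max(a * round_down_to_ten(b), round_down_to_ten(a) * b)
    return lower <= claimed <= upper


print(looks_reasonable(7, 8, 92))  # False
print(looks_reasonable(7, 8, 56))  # True
print(looks_reasonable(7, 8, 60))  # True
```

The last line is the instructive one: an estimate rejects 92 but still lets a near-miss like 60 through. Understanding catches gross errors quickly, which is exactly the kind of check that is hard to apply to an AI-built system that looks functional on the surface.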

And the jobs picture is already shifting:

📉 The displacement picture

57% of US work hours are automatable with current technology, up from 30% in 2023 (nearly doubled in two years).

Source: McKinsey Global Institute, "Agents, Robots, and Us," November 2025

↓27%: "Computer programmer" roles in the US are down 27% in 2 years, with a further 6% decline projected through 2034.

Source: US Bureau of Labor Statistics, via Marina Wyss / Data Science Collective, 2026

So, what's the verdict?

You can't teach coding for coding's sake — not anymore. The "learn to code and get a job" pitch was always too narrow, and AI has made it obsolete. But that doesn't mean coding leaves the classroom. It means coding stays for the same reason maths stays: as a playground for learning logic, for tinkering, for building things and seeing how they break. Not the way you learn a new language. The way you learn to think.

The ICT period in most schools today teaches children to use word processors, spreadsheets, and presentation software — tools that were relevant twenty years ago. The question is whether schools can use that same time slot to build the kind of thinking that every expert in this debate agrees will matter. Decomposition. Data reasoning. AI literacy. The confidence to create with technology, not just consume it.

🚀 We believe this is the future of the ICT classroom. And we're excited to be building it.

Sources: Stack Overflow Developer Survey (2025) · Gartner Magic Quadrant for AI Code Assistants (2025) · GitHub/Accenture Copilot Research (2024) · McKinsey Global Institute (2025) · WEF Future of Jobs Report (2025) · US Bureau of Labor Statistics (2024) · Anysphere/Cursor (2026)

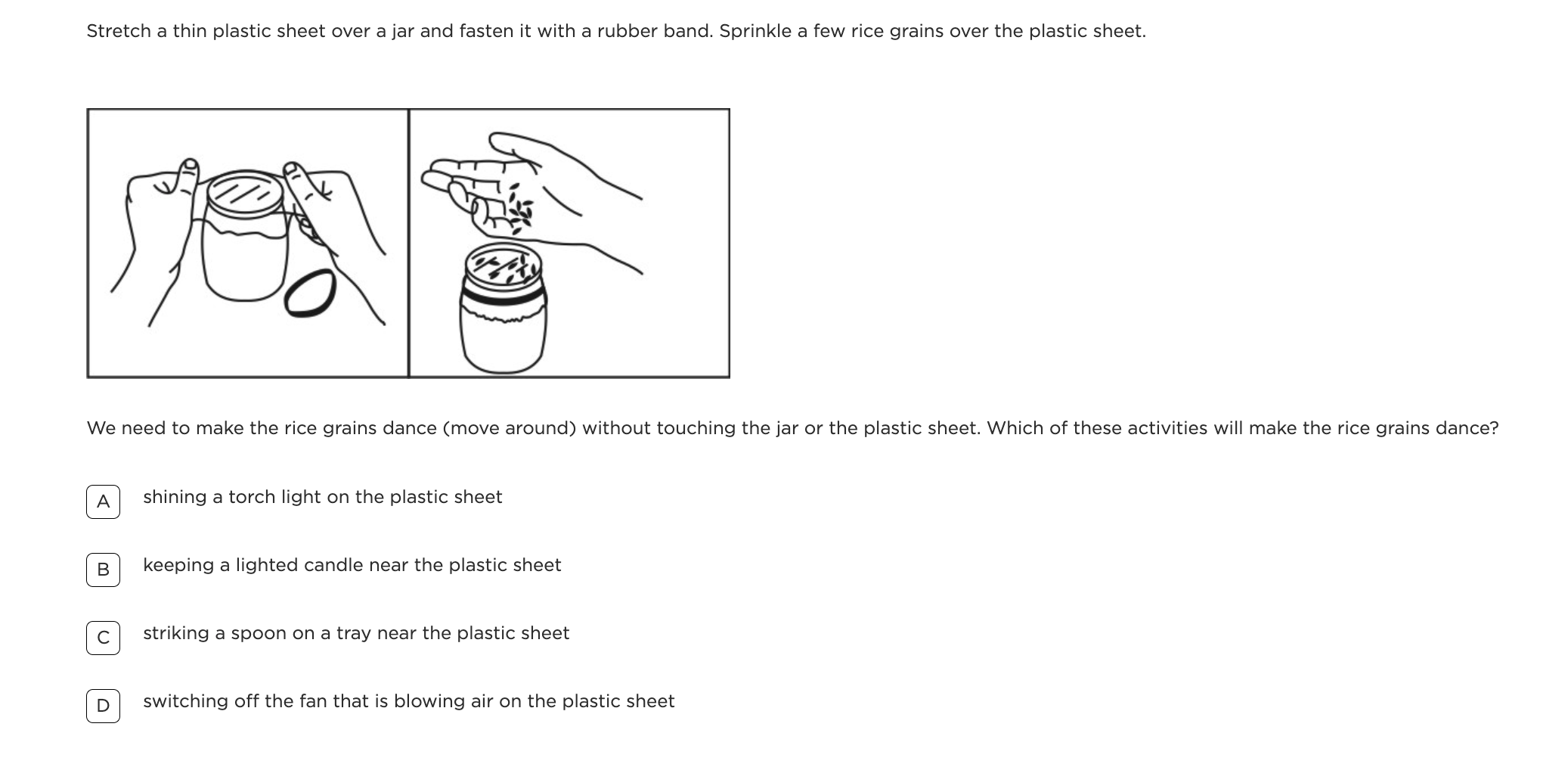

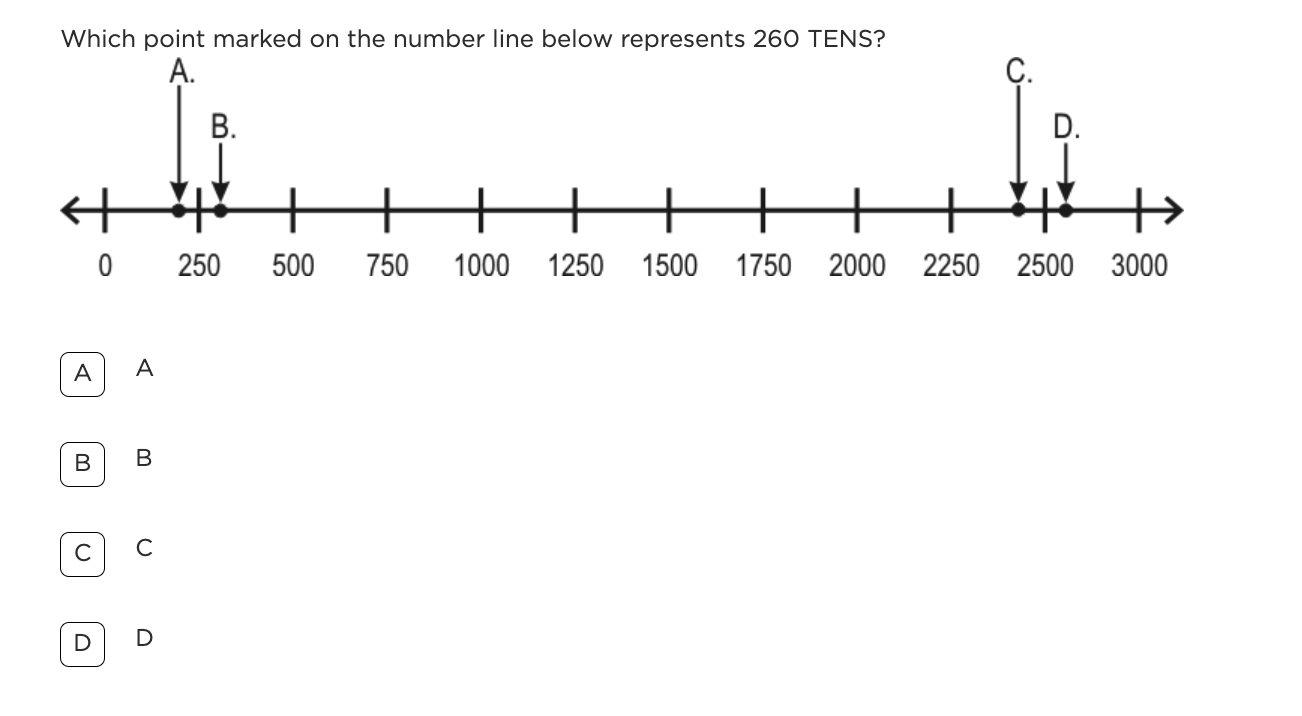

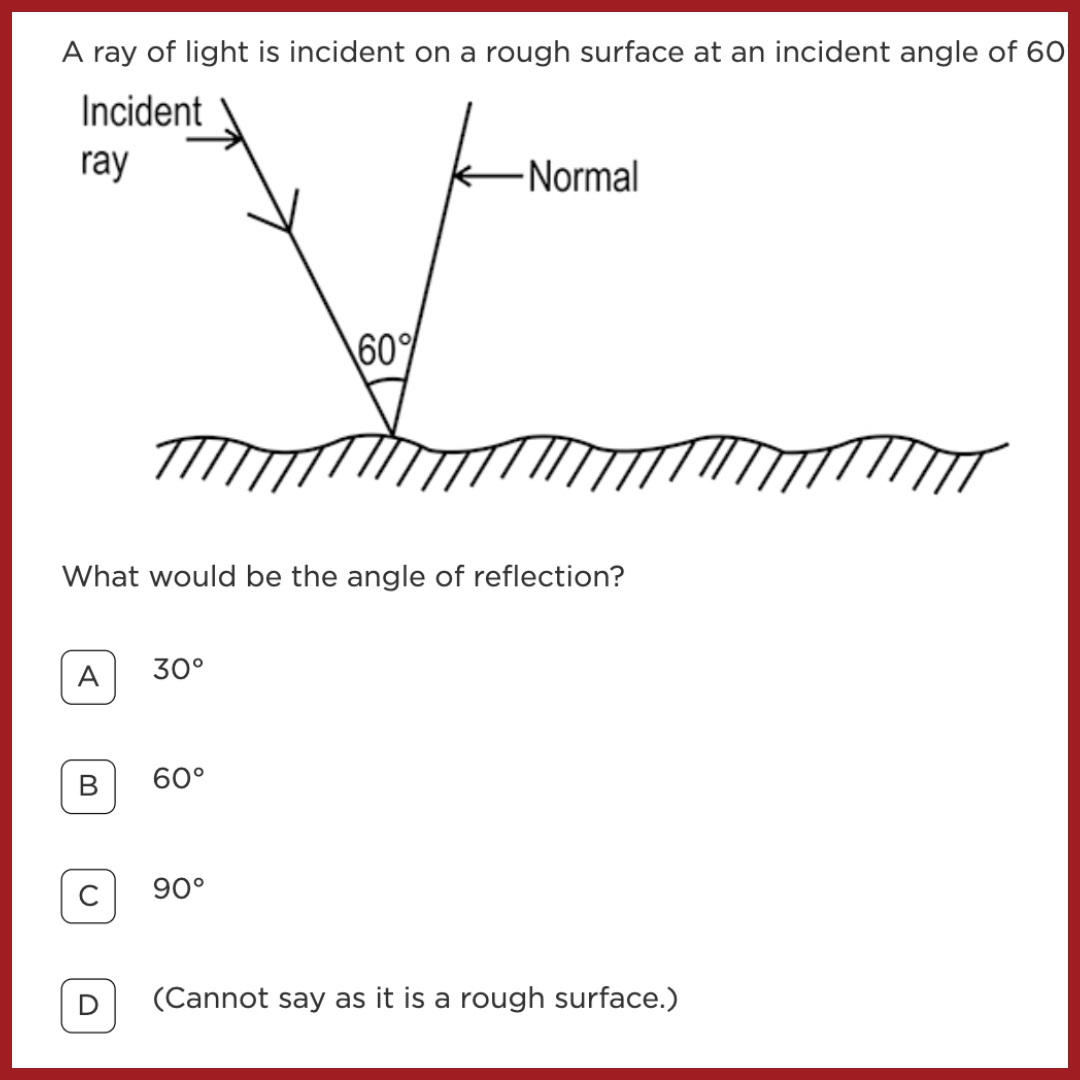

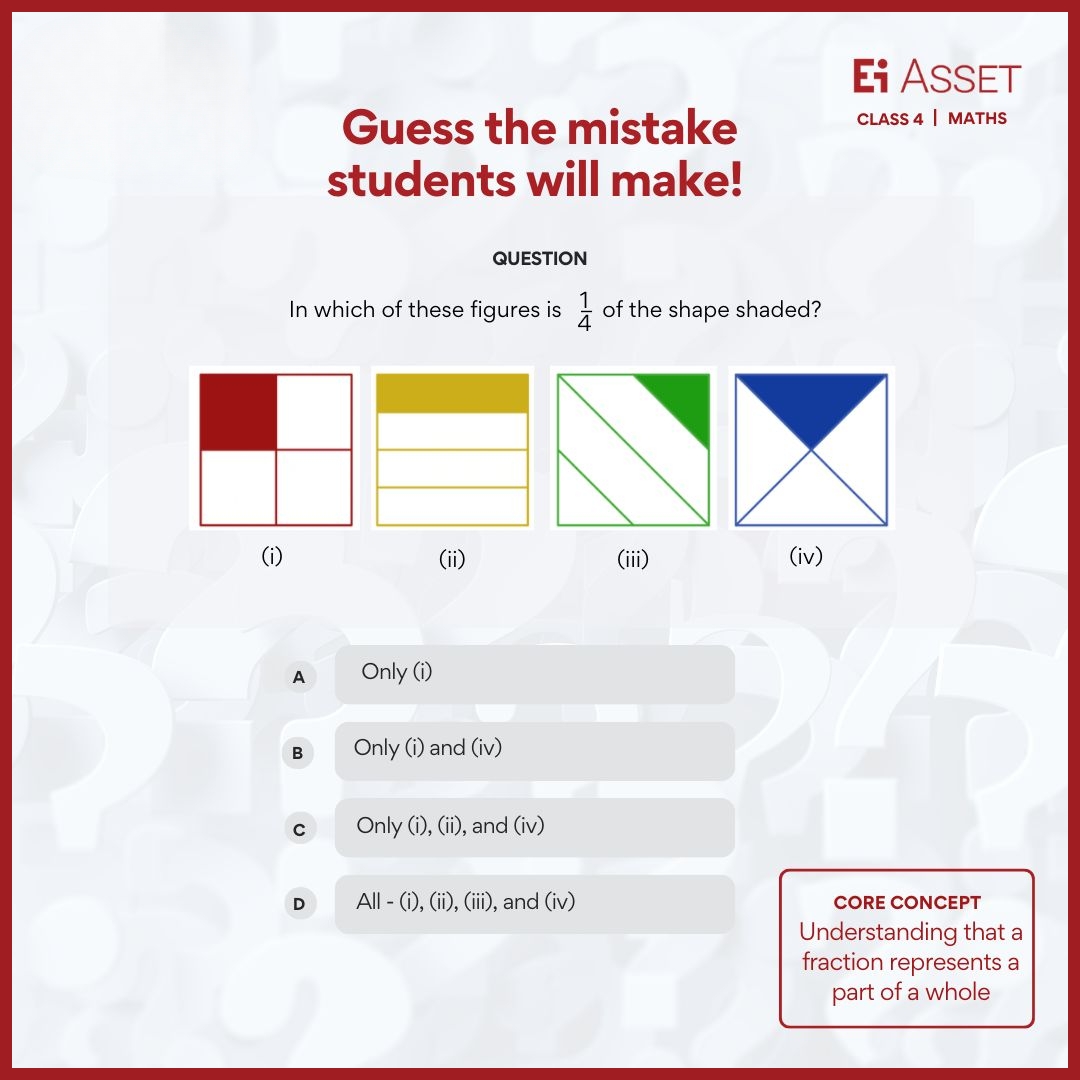

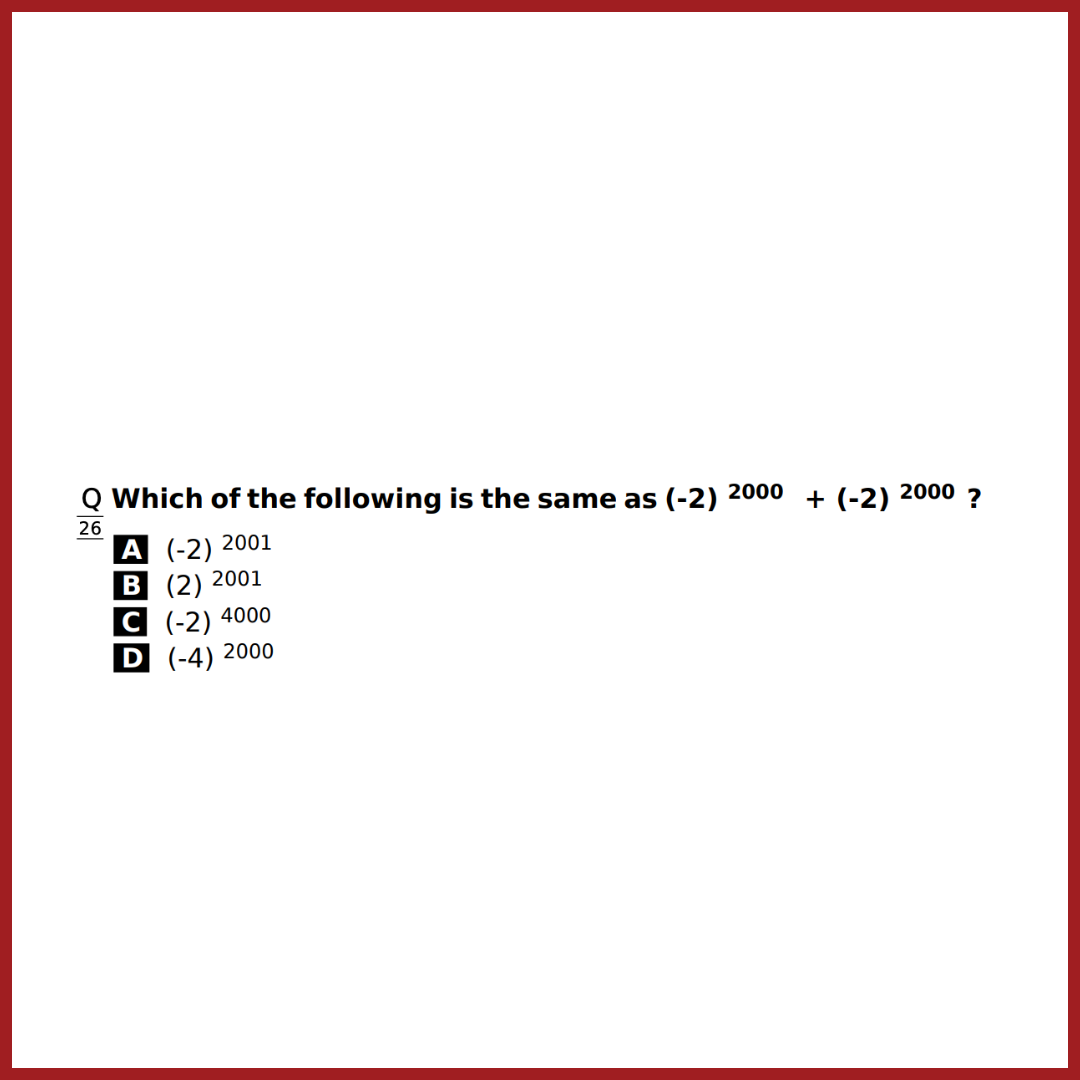

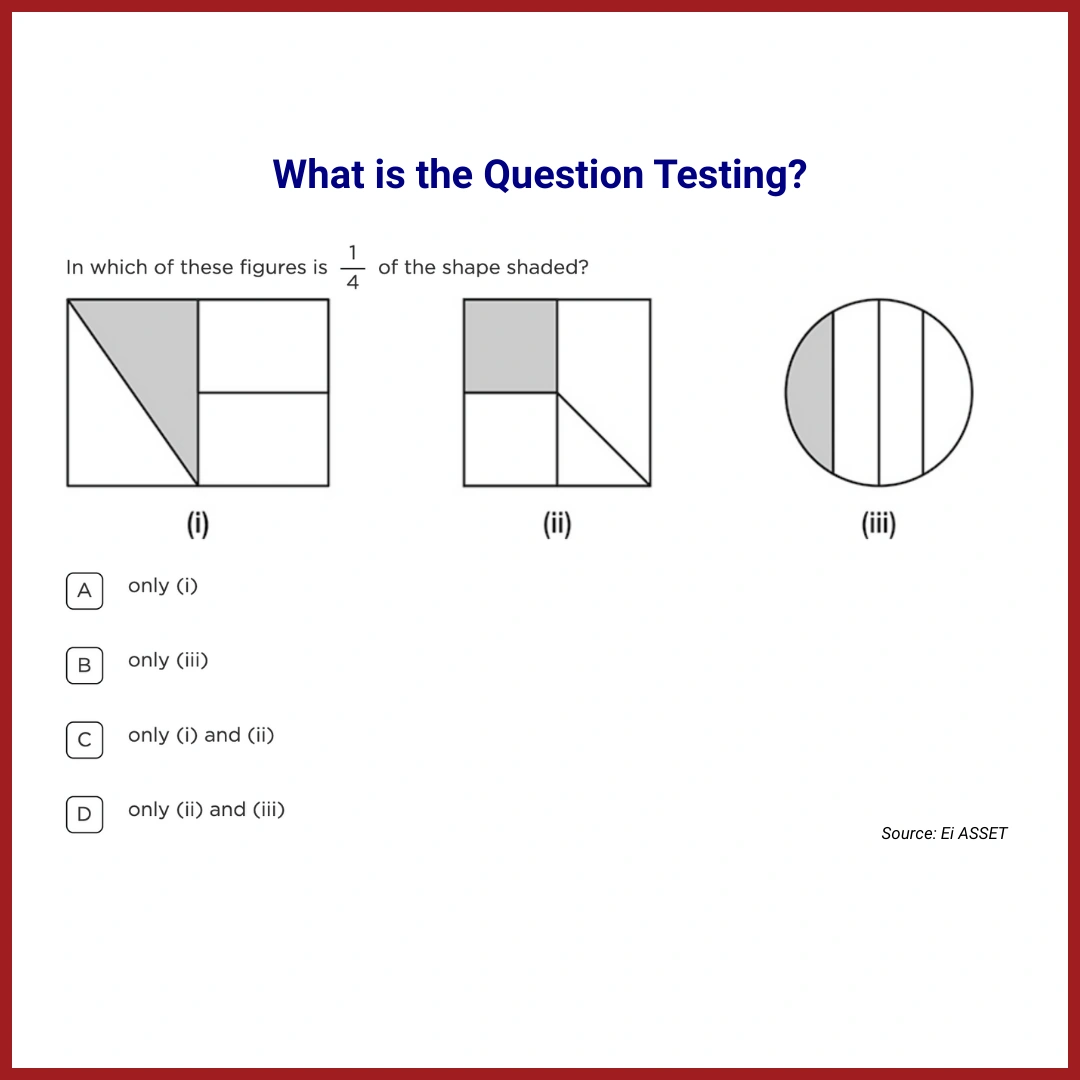

🔲 Try this — from Ei ASSET AI & Digital Thinking

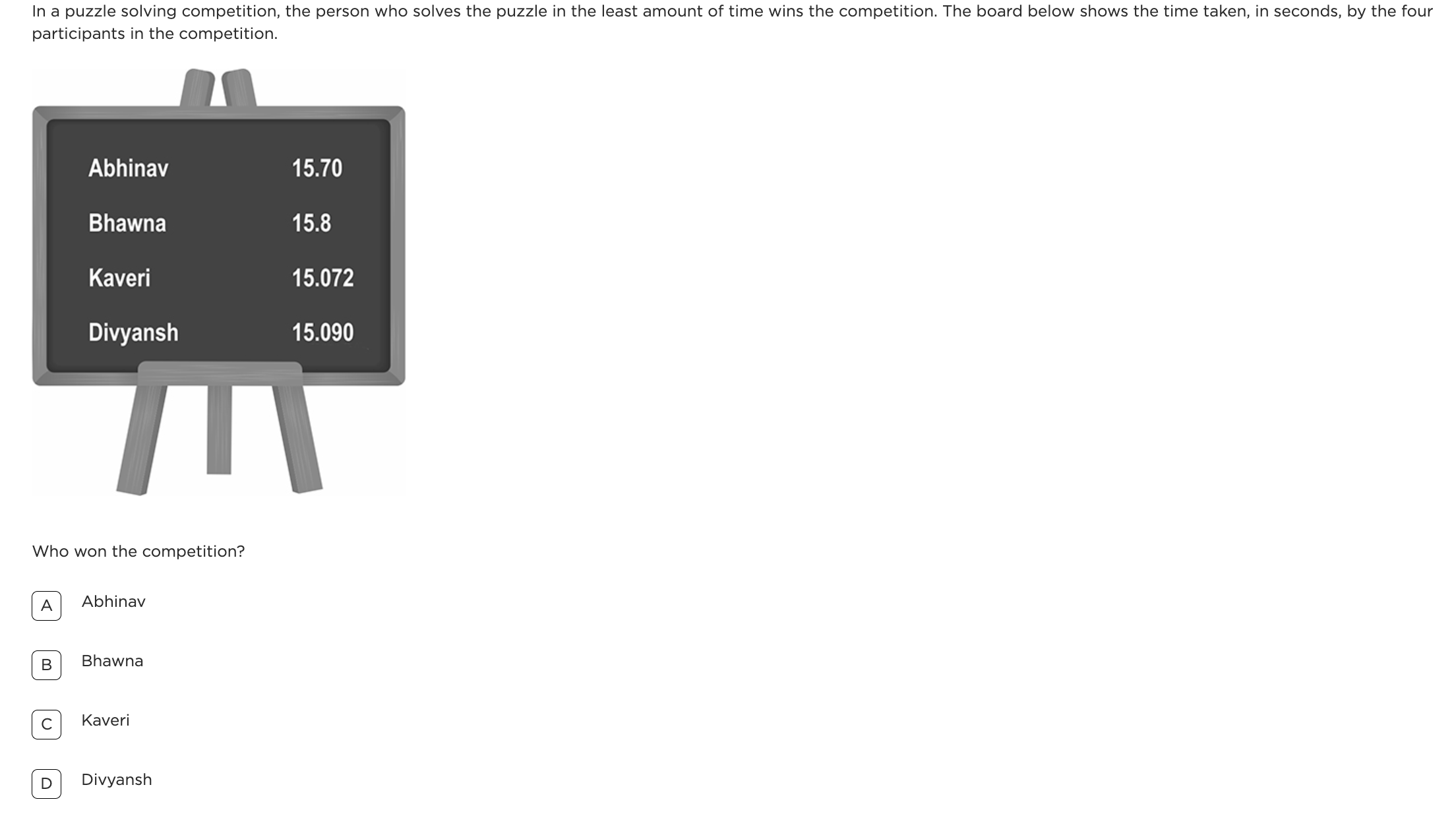

Ei ASSET Question

Identifying Misleading News Headlines (Grade 7 & 8)

Why this matters

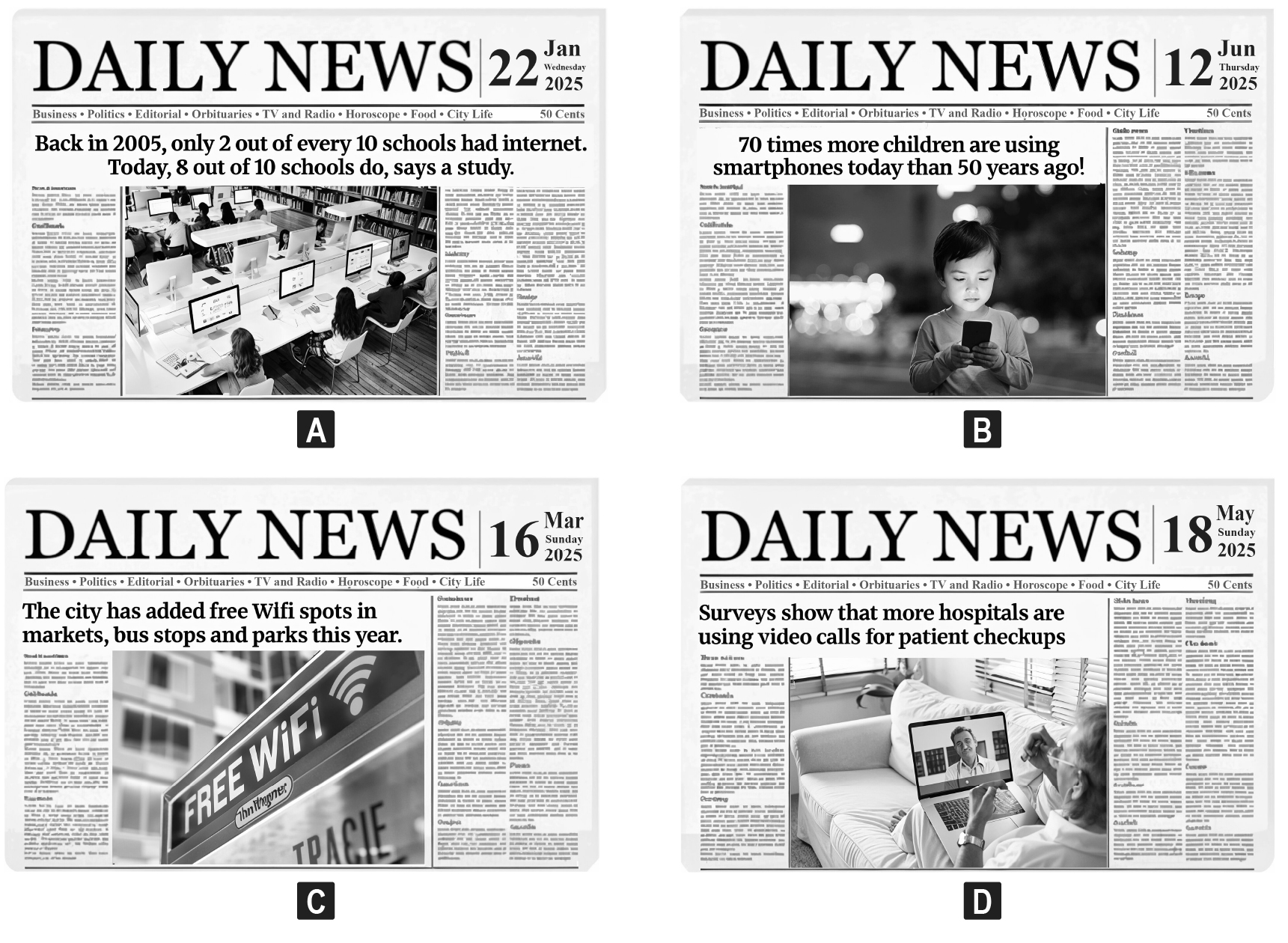

The article argues that children who can't question data and media will "simply accept whatever the algorithm tells them." This question tests exactly that instinct: the ability to pause, scrutinise, and spot what looks plausible but isn't.

Look at the four headlines below.

Which one is MOST LIKELY to be FALSE news?

Option A

Option B ✓ Correct Answer

Option C

Option D

1,364 Grade 7 students attempted this question

1,082 Grade 8 students attempted this question

📊 Performance Data

Distribution of responses by option (Grade 7 vs Grade 8)

| Option | Grade 7 | Grade 8 |
| --- | --- | --- |
| A | 13% | 11% |
| B ✓ | 25% | 32% |
| C | 25% | 24% |
| D | 37% | 31% |

💡 Key Insight

Only 25% of Grade 7 and 32% of Grade 8 students identified the false headline, with the most common wrong answer being Option D. This highlights a significant gap in media literacy at exactly the age when news and forwarded content become part of daily life.

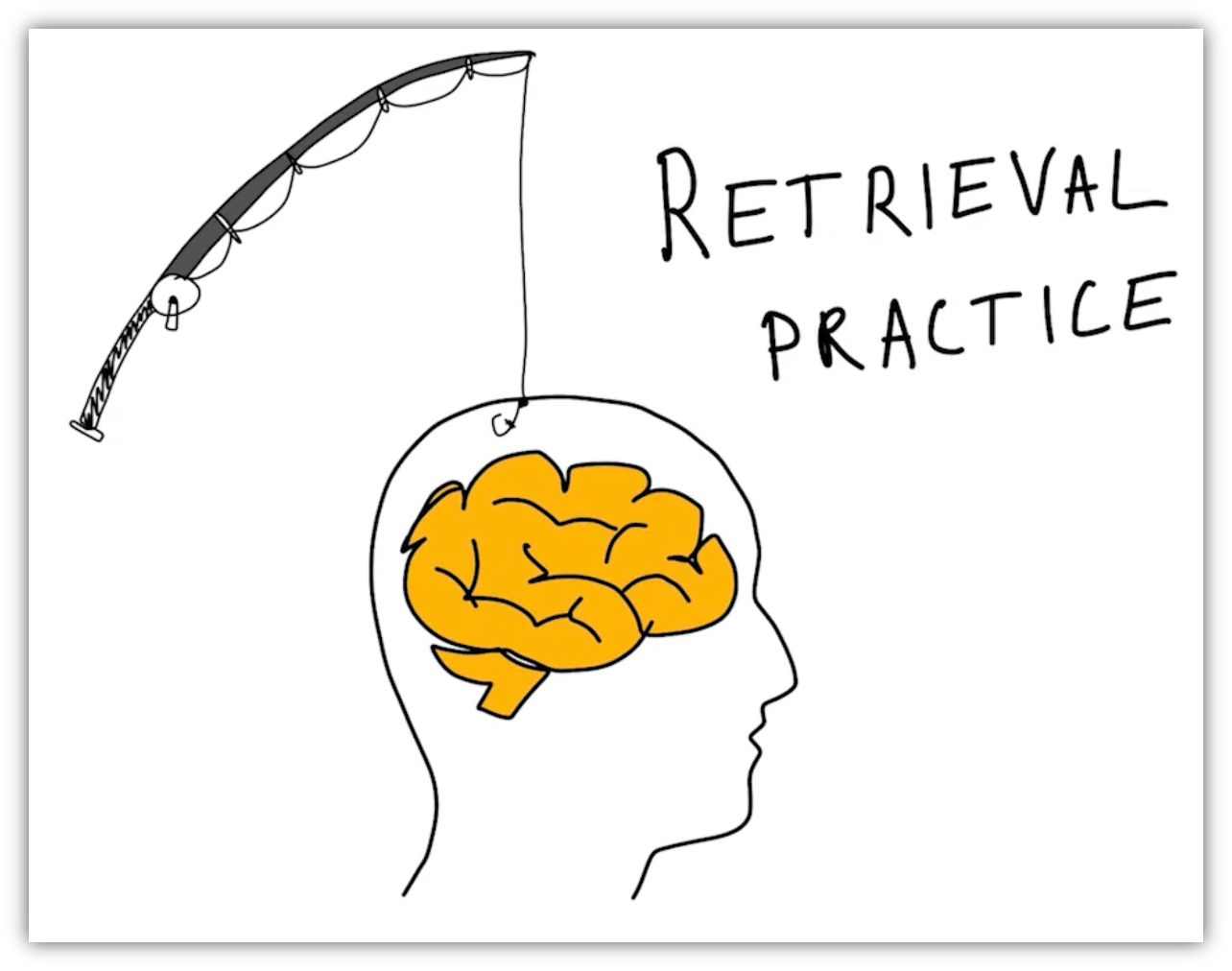

🔲 Module Spotlight — Mindspark AI & Digital Thinking

Mindspark Module · Critical Thinking

Fact Detective

The article makes a pointed observation: children who can't question what they see will "simply accept whatever the algorithm tells them." Advertisements are one of the oldest algorithms of all — designed, tested, and optimised to make you believe something and act on it. Fact Detective puts students inside that system. They're shown a real-world advertisement and must decide: is this a genuine claim backed by evidence, or persuasive language designed to make them buy? After choosing, an AI tutor generates follow-up questions tailored to their specific answer — not to correct them immediately, but to probe whether they actually understand the reasoning behind their choice.

Are these principles already part of your teaching toolkit?

We’d love to hear your story!
